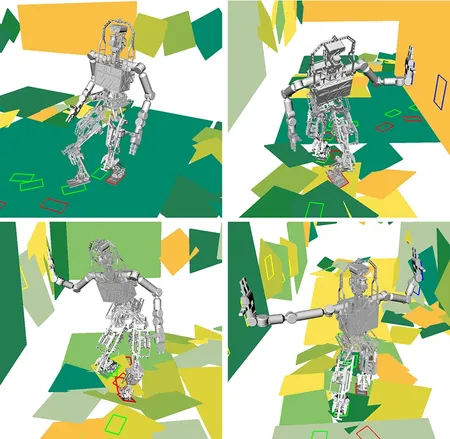

Robotic systems can process sensor data, such as from images and video, more diligently than humans. To take full advantage of this fact, researchers are working to turn a wide array of sensor data into models that allow a robot to better understand actions, events, and environments around it.

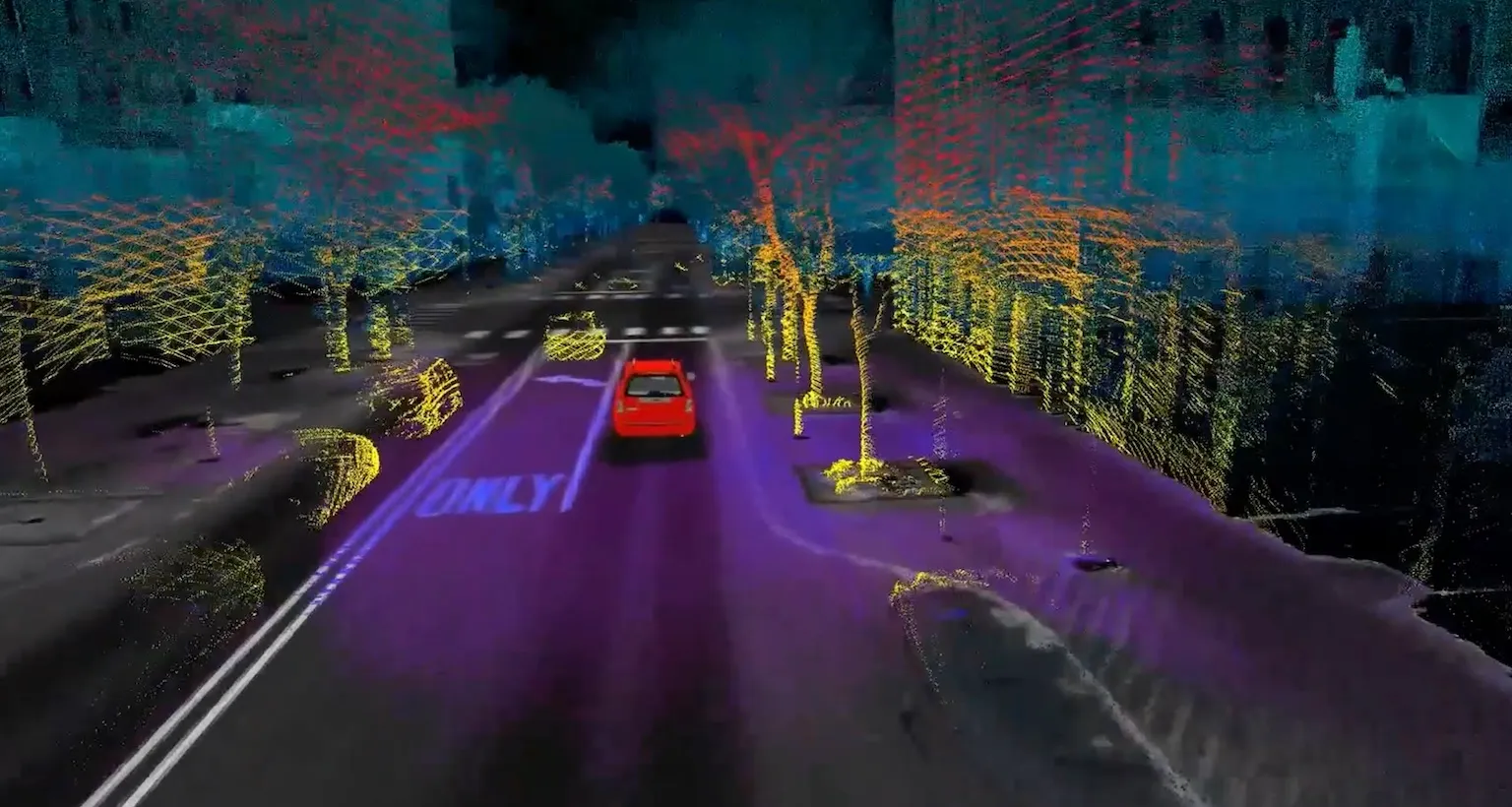

While traditional computer vision often relies on RGB cameras, Michigan faculty leverage suites of sensors, including LiDAR, sonar, ground-penetrating radar, thermal cameras, and even touch sensors to enable operation in conditions where vision often fails. The focus is on moving from raw data to actionable understanding of actions, events, and environments.

Researchers allow robots to categorize terrain or objects, like identifying shipwrecks in sonar or surface types for planetary rovers, while simultaneously performing Simultaneous Localization and Mapping (SLAM). By looking at both physical models of perception and results from deep neural networks, researchers are developing machines that can react appropriately to new phenomena, even with little explanation beforehand.

This work can also involve using Neural Representations and Neural Radiance Fields (NeRF) to create three-dimensional scenes of complex environments like the sea floor or dense urban streets. Related research allows information learned from one sensor type, like a camera, to enhance the performance of another, such as radar, improving how a robot detects objects and navigates in the field.

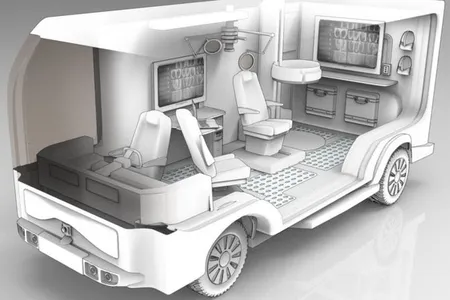

This research extends into healthcare and biology, where perception is used to help doctors diagnose and treat patients, and help ecologists monitor natural ecosystems. For example, faculty are developing systems for robotic eye imaging to automate disease screening and guide surgery. In the natural sciences, computer-vision object tracking is applied to analyze dolphin kinematics and habitat use and also forestry surveys to monitor tree health and fire risk.

Advances in computer vision at Michigan led to the founding of Voxel51, a leading visual AI and computer vision data platform. The company allows others to curate their data, generate annotations of samples, and evaluate models across scenarios to improve accuracy and save development time. As computer vision is a foundational technology, the applications are vast: precision agriculture and livestock management, autonomous driving, aviation inspections, and even helping coaches and players in a variety of sports.