At a crosswalk, humans can easily read a driver’s slightest nod. These gestures give us the confidence to step out into a road full of two-ton machines. With an automated vehicle, however, that human-to-human communication is unreliable: the driver may not be in control or even paying attention, leaving the pedestrian unsure whether it is safe to cross.

To inform future solutions to this problem, a team led by University of Michigan researchers observed how people behave as pedestrians in a virtual reality city full of autonomous vehicles.

“Pedestrians are the most vulnerable road users,” said Suresh Kumaar Jayaraman, a PhD student in mechanical engineering. “If we want wide-scale adoption of autonomous vehicles, we need those who are inside and outside of the vehicles to be able to trust and be comfortable with a vehicle’s actions.”

“In this study, we looked at how different driving behaviors affect pedestrians’ trust in the autonomous vehicle systems,” said Lionel Robert, associate professor in the School of Information and core faculty in U-M’s Robotics Institute.

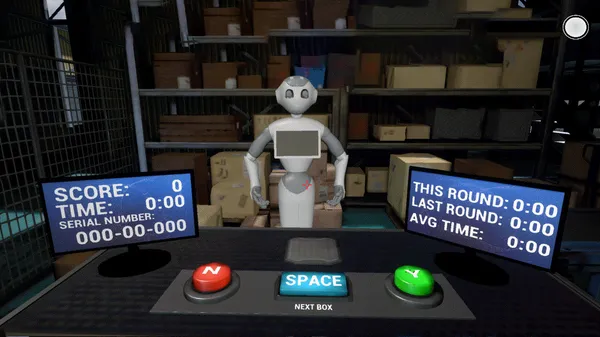

The team put study participants in a unique virtual reality setup equipped with an omnidirectional treadmill, typically used for gaming, that allows walking in any direction. The participants were tasked with moving three balls, one at a time, from one side of a street to the other as simulated autonomous vehicles zipped by. They performed this task at two types of crosswalks, one with a signal and one without. The team also varied the cars’ behavior across trials, from aggressive to normal to defensive, by changing the vehicles’ speeds and stopping characteristics.

After each trial, participants were asked how much they trusted each vehicle.

“What we found, unsurprisingly, is that pedestrians trusted vehicles that adopted the slower, more defensive driving style,” said Robert.

Aggressive driving, on the other hand, lowered trust ratings.

“However, if there was a traffic signal at the crosswalk, the driving behavior did not really matter,” said X. Jessie Yang, assistant professor of industrial and operations engineering.

“Pedestrians considered the traffic signal a higher authority, and expected the vehicles to stop no matter how aggressive the automated vehicle was driving.”

The team also discovered that higher trust caused pedestrians to jaywalk more, move closer to the cars as they crossed, and look less at oncoming cars.

“This is the issue of overtrust, which might lead to accidents or injury,” Jayaraman said.

According to the researchers, there is an optimal level of pedestrian trust: enough that pedestrians feel comfortable crossing the road, but not so much that it encourages risky behavior. Future automated cars could adjust their driving behavior to reach that level of trust.

In addition, the study’s findings could help cars better predict a pedestrian’s actions by using behaviors such as eye contact as a proxy for trust.

A paper on the work, titled “Pedestrian Trust in Automated Vehicles: Role of Traffic Signal and AV Driving Behavior,” is published in Frontiers in Robotics and AI.

Additional authors on the paper include Chandler Creech, former graduate student of electrical and computer engineering; Dawn Tilbury, professor of mechanical engineering; Anuj Pradhan, professor of mechanical and industrial engineering at the University of Massachusetts Amherst; and Katherine Tsui of the Toyota Research Institute.

The research was supported in part by the Toyota Research Institute and the National Science Foundation.